The era of "malicious compliance" in AI identity is here

States passed laws requiring AI to identify itself. Companies responded with invisible footers and ToS updates. Disclosure has failed. We need legibility.


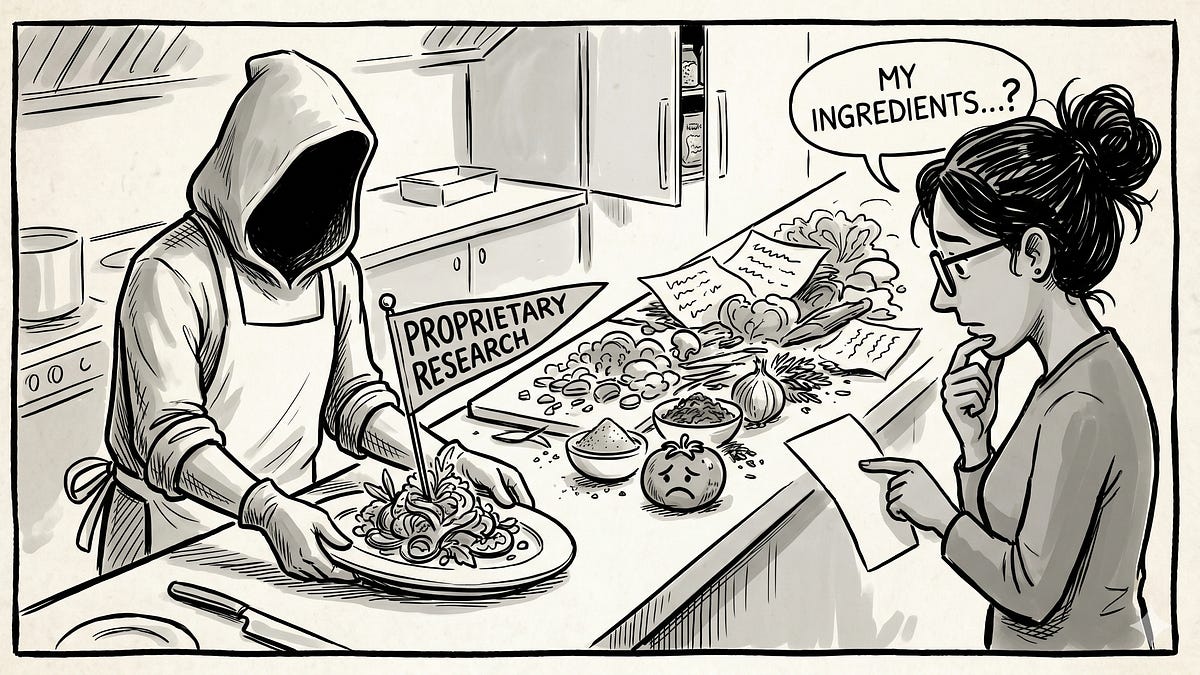

Ariel Sakin, Apr 28, 2026

A few months ago I started warning friends and colleagues that the internet was about to lose its primary identity primitive. I said that if we didn’t establish a structural way to separate humans from autonomous agents, the compliance industry would build a web of loopholes so dense we’d never find our way out.

I was wrong about the timeline. I thought it would take 18 months. It took exactly the time it takes to push a Terms of Service update.

We are now living in the era of Malicious AI Compliance. I’m calling it that here so the term has somewhere to point.

Agents are commenting on your posts, applying to your job listings, arguing with you in community forums, and sliding into your professional networks. And yes, legally, they are “disclosing” what they are.

If you squint at the 8-point gray font at the bottom of a threaded reply.

If you remember the single pop-up you clicked “Accept” on 40 days ago.

If you read section 4.b of the enterprise EULA.

We built the internet with one assumption baked into the identity layer: the @ handle belongs to a person. That assumption is now entirely broken, and the laws we passed to fix it are actively making it worse.

The failure of “Reasonable Belief”

When Nebraska passed the Conversational AI Safety Act (LB 525) in April 2026, and other states began following suit, the intention was good.
In fact, parts of it are genuinely excellent legislation - the crisis protocols will save lives, and the protections for minors are real and meaningful.

The law requires AI to disclose itself when a user “reasonably could believe they are interacting with a human being.” Fine words. Optimized away in 30 days.

The law treats identity as a point-in-time problem. It assumes that as long as a platform told you once, somewhere, somehow, that an AI was involved, the obligation was met.

The industry immediately optimized for the lowest-effort way to comply while still hiding in plain sight.

Here is what “compliance” looks like today:

The Ghost Footer: A disclosure appended to the bottom of a post that gets truncated by the platform’s UI (“Read more...”). I’ve watched this pattern deploy across at least three major social platforms in the last two months.

The Implied Consent Trap: A branded chatbot named “Alex” where the company argues no reasonable person would think Alex is human, therefore bypassing the active disclosure requirement entirely. The pattern is in production at Fortune 500 enterprise SaaS vendors I won’t name here, but if you’ve talked to a customer-support “Alex” or “Aria” in the last 60 days, you’ve seen it.

The API Wash: Agents operating through standard enterprise tools where the disclosure is buried in the B2B platform’s service agreement, completely invisible to the end user interacting with the output.

The problem is not disclosure, it’s legibility

Legibility is not a moment, it is a property of a system.

Think about what @ meant when the internet was designed. It meant a person. Not a verified ID, but a human being sitting somewhere, reading and writing. The handle was the primitive.

That primitive has been colonized. Agents have @ handles. They are, by…

This excerpt is published under fair use for community discussion. Read the full article at Substack.