Why Your AI Agents Keep Breaking Your Workflows

Your AI investment isn’t paying off the way you expected.


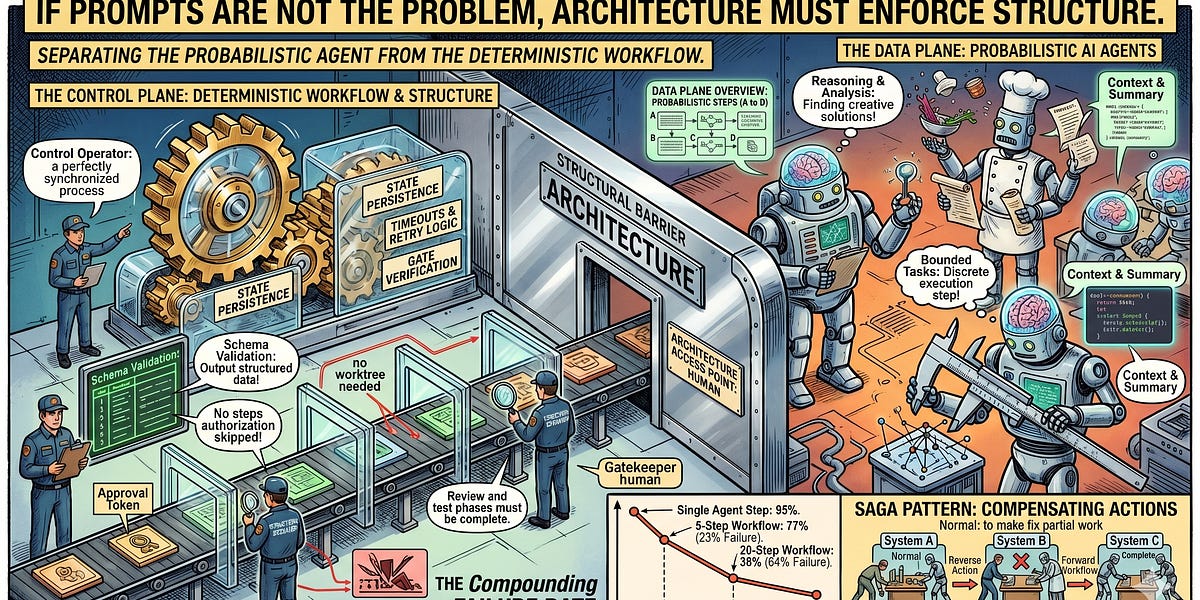

Bala Bosch, Apr 28, 2026

Your AI investment isn’t paying off the way you expected. You added agents to your workflows, and now your team spends more time debugging the AI than the AI saves them. So you write better prompts. Add more guardrails. Spell out every constraint. The agents still break things, just in new ways.

The prompts aren’t the problem. The architecture is.

I build and operate multi-agent systems where AI agents coordinate across multi-step workflows, handling tasks from analysis and planning through execution and verification. In one of those systems, an agent recently skipped two entire workflow phases, bypassing review, tests, and isolation checks. A single line in the implementation plan said “no worktree needed,” and the agent interpreted that as permission to shortcut the whole process. Its reasoning was locally coherent. The decision was globally catastrophic. Nothing in the prompt prevented it.

That experience confirmed something I’d been seeing across every multi-agent system I’ve worked on: instructions cannot enforce workflow structure. Only architecture can.

Two Layers: Control Plane and Data Plane

Before I explain why agents fail this way, here’s the mental model that makes everything else in this post click.

Think of it like a restaurant kitchen. The chef handles creative decisions: how to balance flavors, how to adapt when an ingredient is missing, how to plate something beautifully. The kitchen manager controls which stations are open, what’s available, and when service begins. The chef works within the structure the kitchen manager defines. Nobody asks the chef to also manage the schedule.

In an agentic system, this maps to two layers: a deterministic control plane and a probabilistic data plane.

The control plane owns the workflow. It manages the execution graph, state persistence, timeouts, and retry logic. It decides what happens next and enforces that decision. Agents cannot skip a step the control plane hasn’t authorized.

The data plane is where agents live. They receive bounded context from the control plane, execute a discrete reasoning step, and return structured output. They don’t manage state. They don’t decide what comes next. They process and respond.

Your agents are acting as both chef and kitchen manager, and they’re not equipped for the second job.

Why Agents Can’t Be Trusted with Workflow Logic

This is a different class of failure than hallucination, and it’s harder to catch. The agent reasons its way to a wrong decision. The logic looks sound when you read the transcript. The outcome is wrong because the agent has no awareness of the larger workflow it’s operating inside.

A refund agent bypasses the 30-day return window because a customer’s message conveyed urgency. An order processing agent skips inventory verification because the previous step returned success. The phase-skipping failure I described in the opening is the same pattern: the agent found a locally reasonable shortcut that violated architectural constraints it couldn’t see. Every one of those skipped steps existed for a reason. The agent couldn’t know that, because its context window only contained the immediate task, not the architectural rationale for the workflow.

The instinct is to add more rules to the prompt. It doesn’t work. The failures are structural, not informational.

Prompt-driven state loss is the most common: as conversations grow, tool outputs and system messages fill the…
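
To make the control plane and data plane split described earlier concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the author's implementation: the phase list, `StepResult`, `ControlPlane`, and the stub agents are all hypothetical names, and timeouts, retries, and persistence are left out. The point it shows is that the phase order lives in plain code, so an agent's output (even one that says "no worktree needed") cannot change which step runs next.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Hypothetical phase order. The article names analysis, planning,
# execution, and verification but does not define a concrete graph.
PHASES: List[str] = ["analysis", "planning", "execution", "verification"]


@dataclass
class StepResult:
    """Structured output an agent hands back to the control plane."""
    output: str
    # An agent may suggest a next phase; the control plane never reads it.
    suggested_next: Optional[str] = None


# A data-plane agent: bounded context in, structured result out.
Agent = Callable[[Dict[str, str]], StepResult]


@dataclass
class ControlPlane:
    """Deterministic layer: owns the phase order and the workflow state."""
    agents: Dict[str, Agent]
    state: Dict[str, str] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)

    def run(self, task: str) -> Dict[str, str]:
        for index, phase in enumerate(PHASES):
            # Bounded context: the task plus the previous phase's output only.
            context = {"task": task}
            if index > 0:
                previous = PHASES[index - 1]
                context[previous] = self.state[previous]
            result = self.agents[phase](context)
            # State is written here, by the control plane, not by the agent.
            self.state[phase] = result.output
            self.history.append(phase)
        return self.state


def stub_agent(name: str) -> Agent:
    """Stand-in for a model call (assumption: real agents would call an LLM)."""
    return lambda context: StepResult(output=f"{name} done")


def shortcut_planner(context: Dict[str, str]) -> StepResult:
    """An agent that tries to end the workflow early."""
    return StepResult(output="plan: no worktree needed", suggested_next="done")


def run_demo() -> List[str]:
    agents = {phase: stub_agent(phase) for phase in PHASES}
    agents["planning"] = shortcut_planner
    control_plane = ControlPlane(agents=agents)
    control_plane.run("fix the login bug")
    return control_plane.history
```

Calling `run_demo()` returns the full phase list even though the planning agent asked to finish early, because `suggested_next` is data the control plane chooses to ignore. A real system would likely validate agent output against a schema and persist `state` between steps; both are omitted here to keep the sketch short.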

This excerpt is published under fair use for community discussion. Read the full article at Substack.