Why Model Collapse in LLMs is Inevitable With Self-Learning

The article argues that large language models (LLMs) and diffusion models are fundamentally statistical systems that depend on human-generated data. Without a continuous supply of external input, training them on their own output sets off degenerative dynamics that end in model collapse. It calls the idea that LLMs can reach self-improvement or artificial general intelligence (AGI) through internal weight adjustments flawed and unsupported by evidence. Instead, such models risk converging toward a statistical singularity, producing increasingly distorted or meaningless output over time.
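The degenerative loop described in the summary can be illustrated with a toy that is not taken from the article: a one-dimensional Gaussian stands in for the "model", and each generation is refit only to samples drawn from the previous generation. The function name `self_train`, the sample size, and the seed are assumptions chosen for illustration. This is a minimal sketch of the variance-shrinking effect under finite sampling, not a simulation of an actual LLM.

```python
import random
import statistics


def self_train(generations: int, sample_size: int, seed: int = 0) -> list[float]:
    """Refit a Gaussian to its own samples each generation.

    Returns the standard deviation after every generation, starting
    with the initial (hypothetical "human data") distribution.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: stand-in for human-generated data
    history = [sigma]
    for _ in range(generations):
        # The next "model" only ever sees output of the previous one.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)  # maximum-likelihood estimate
        history.append(sigma)
    return history


if __name__ == "__main__":
    spread = self_train(generations=200, sample_size=10)
    for gen in (0, 50, 100, 150, 200):
        print(f"generation {gen:3d}: stdev = {spread[gen]:.3e}")
```

With a small sample per generation, the fitted spread tends to drift toward zero, because each refit slightly underestimates the variance and no outside data corrects it. Feeding fresh samples from the original distribution into each generation is the toy equivalent of the continuous external input the summary refers to.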

Opening excerpt (first ~120 words)

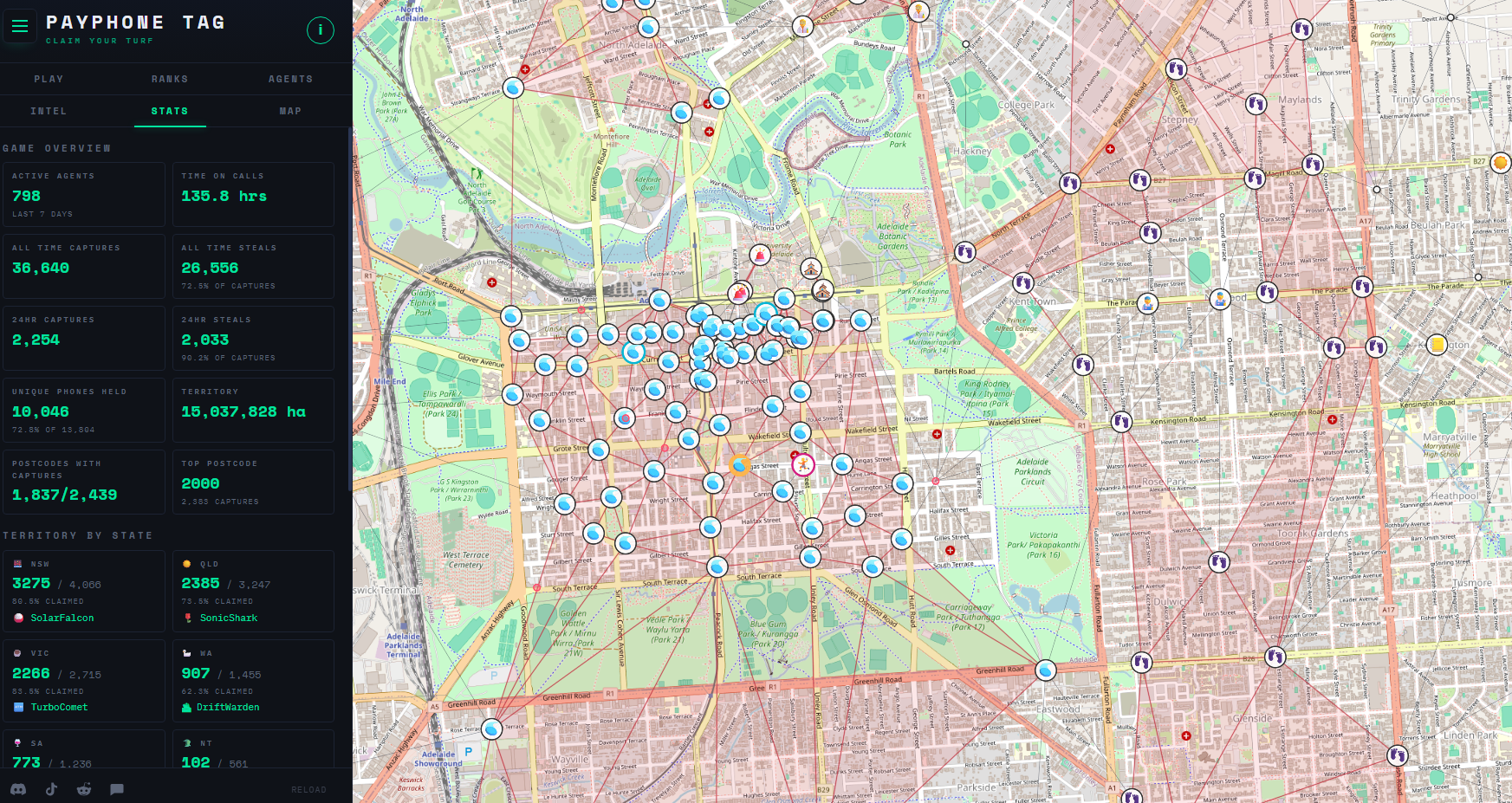

By Maya Posch, April 29, 2026

There is a persistent belief in the ‘AI’ community that large language models (LLMs) have the ability to learn and self-improve by tweaking the weights in their vector space. Although there’s scant evidence that tweaking a probability vector space is anything like the learning process in biological brains, we nevertheless get sold the idea that artificial general intelligence (AGI) is just around the corner if we do just enough tweaking.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at Hackaday.