Claude Cowork Now Runs Any LLM. Test It Free

OpenAI, Gemma, Kimi K2, or run locally. Free via OpenRouter. Anthropic shipped it quietly.

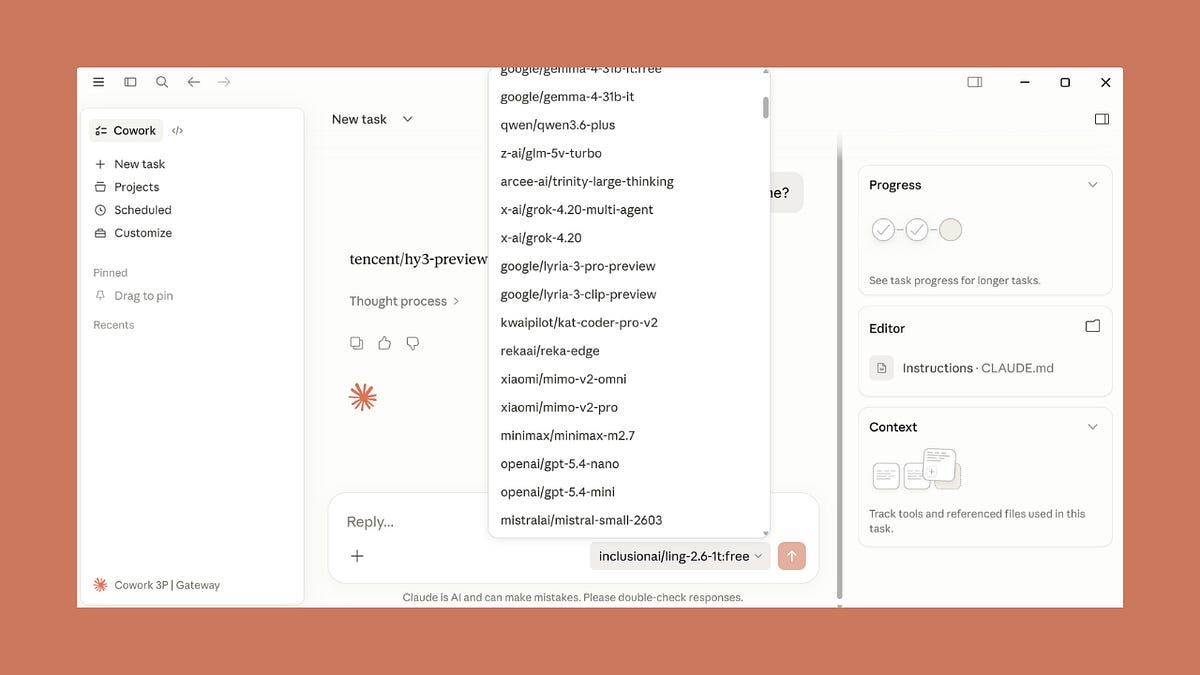

Paweł Huryn · Apr 23, 2026

You can now run Claude Cowork and Code in Claude Desktop against any LLM.

GPT-5, Grok, Gemini, open-weight models via OpenRouter, a local model on your laptop, or your enterprise gateway (Bedrock, Vertex, Foundry).

Anthropic shipped it quietly. No announcement. No blog post. Just technical docs. I discovered it by accident. 20+ hours later, still zero official word.

> Paweł Huryn (@PawelHuryn): Anthropic has quietly shipped third-party inference for Cowork and Code in Claude Desktop. This should work with local models or OpenRouter via LiteLLM proxy. Is it just me?
> 7:46 PM · Apr 22, 2026 · 530K Views · 46 Replies · 68 Reposts · 947 Likes

1. What It Actually Is

Built for enterprises and individuals. Same config panel, same admin controls.

> If your organization uses Amazon Bedrock, Google Cloud Vertex AI, Azure AI Foundry, or an LLM gateway to access Claude, you can deploy Claude Cowork to run on the same infrastructure.

This is a research preview. The official docs cover three paths:

- regulated industries
- companies running Claude through their own gateway
- individuals on pilot/evaluation setups

Any individual can install it today.

Every admin control (per-user token caps, MCP allowlist, OpenTelemetry, block auto-updates, remove built-in tools) ships on all three paths.

OpenRouter counts as an LLM gateway. Anthropic doesn’t list it in the docs, but I tested that it works. Confirmed by the OpenRouter CEO:

> Alex Atallah (@alexatallah): OpenRouter now works in Claude Cowork! (quoting Paweł Huryn’s tweet above)
> 2:43 AM · Apr 23, 2026 · 43.9K Views · 11 Replies · 7 Reposts · 231 Likes

Brief summary:

Foundry: during this research preview, Claude on Foundry still runs on Anthropic’s infrastructure. Bedrock and Vertex are the paths with full provider-side data residency.

2. Who This Is For

Two audiences. Same config, different endpoint.

Individuals:

- Hitting Max plan weekly limits
- Want to try Cowork without paying $100 or $200 a month
- Running a local model on code you can’t send to any API

Enterprise:

- Already on Bedrock, Vertex, or Foundry. Keep the harness inside your existing compliance boundary
- Security approves your cloud provider but not Anthropic direct
- Need team-wide admin controls (token caps, MCP allowlist, OpenTelemetry)

Side note: Find this helpful? Here are some other Claude posts you may have missed:

- Claude Cowork: The Ultimate Guide for PMs
- The Guide to Claude Code for PMs
- What I Learned Building a Self-Improving Agentic System with Claude
- The Claude Dispatch Guide: 48 Hours Running AI Agents From My Phone
- Three CLAUDE.md Blocks That Make Claude Get Smarter Every Session
- The Ultimate Guide to Claude Opus 4.7

3. Enable & Configure

3.1 Connect to OpenRouter

No proxy needed. Point Claude Desktop straight at OpenRouter.

Menu → Developer → Configure Third-Party Inference

Set:

- Connection: Gateway
- Gateway base URL: https://openrouter.ai/api
- Gateway API key: your OpenRouter key
- Gateway auth scheme: x-api-key

In Sandbox & workspace, configure "Allowed egress hosts" so that your agents can access the web (for example, all sites).

Click: Apply locally → Relaunch now

Log out, then Continue with Gateway:

You’ll see…
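The gateway settings in 3.1 (base URL, API key, `x-api-key` auth scheme) can be sanity-checked outside Claude Desktop. The following is a minimal sketch, not Anthropic's or OpenRouter's documented procedure. It assumes the gateway accepts Anthropic Messages-style requests at `{base URL}/v1/messages`, which the article does not confirm. The names `GatewayRequest`, `build_gateway_request`, and `send`, and the model id `vendor/model-id`, are hypothetical placeholders.

```python
import json
import urllib.request
from dataclasses import dataclass


@dataclass(frozen=True)
class GatewayRequest:
    url: str
    headers: dict
    body: bytes


def build_gateway_request(base_url: str, api_key: str, model: str, prompt: str) -> GatewayRequest:
    """Build (but do not send) an Anthropic Messages-style request.

    Assumption: the gateway exposes POST {base_url}/v1/messages and
    authenticates with an x-api-key header, as Anthropic's own API does.
    """
    url = base_url.rstrip("/") + "/v1/messages"
    headers = {
        "x-api-key": api_key,               # matches "Gateway auth scheme: x-api-key"
        "anthropic-version": "2023-06-01",  # version header used by the Messages API
        "content-type": "application/json",
    }
    payload = {
        "model": model,  # placeholder; use an id your gateway actually serves
        "max_tokens": 64,
        "messages": [{"role": "user", "content": prompt}],
    }
    return GatewayRequest(url, headers, json.dumps(payload).encode("utf-8"))


def send(req: GatewayRequest, timeout: float = 30.0) -> dict:
    """Send the request and return the decoded JSON response (needs network access)."""
    http_req = urllib.request.Request(req.url, data=req.body, headers=req.headers, method="POST")
    with urllib.request.urlopen(http_req, timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Inspect the request without sending it; call send(req) only with a real key.
    req = build_gateway_request("https://openrouter.ai/api", "YOUR_OPENROUTER_KEY", "vendor/model-id", "ping")
    print(req.url)
```

Building the request separately from sending it lets you inspect the URL and headers before any key leaves your machine. If `send` fails with an authentication or not-found error, the gateway may expect a different path or auth scheme than the one assumed here.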

This excerpt is published under fair use for community discussion. Read the full article at Productcompass.