The HIPAA Violations Hiding in Your Team's Browser History

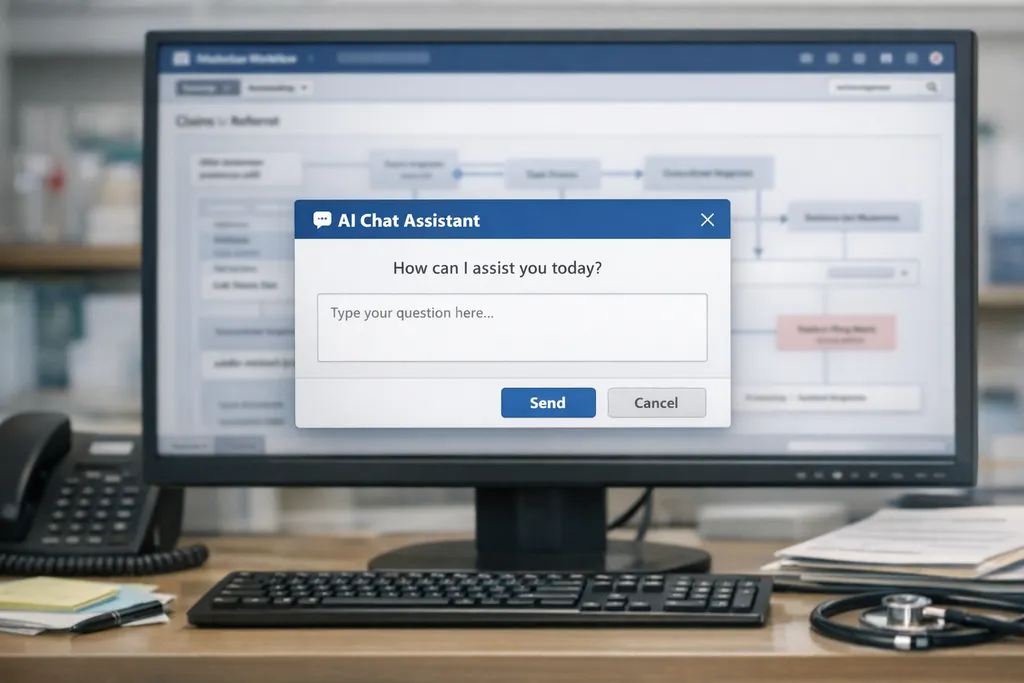

Healthcare workers are unintentionally violating HIPAA by using AI tools like ChatGPT and Copilot to assist with tasks, often pasting protected health information into public platforms without realizing the risk. Existing security controls frequently fail to detect these actions because AI usage blends in with normal browser activity and productivity software. The real issue lies not in employee intent but in the lack of infrastructure to monitor, prevent, or audit AI-related data exposure in real time.

Opening excerpt (first ~120 words)

A billing clerk is trying to clear a backlog before lunch. She copies part of a claim into ChatGPT to clean up the language and help with coding. The text includes a date of birth, an MRN, and a diagnosis. She is not trying to cut corners. She is trying to move faster and get the work done.

No alert fires. No workflow stops. No one gets notified. This is what shadow AI looks like in healthcare.

A lot of organizations still think their biggest AI risk lives inside the tools they have formally reviewed. The bigger problem is often the one they have not reviewed. It lives in the browser, inside routine work, in moments that feel harmless to the person doing them. Most of the people creating this risk do not think of themselves as taking a risk at all.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at Three Gates.