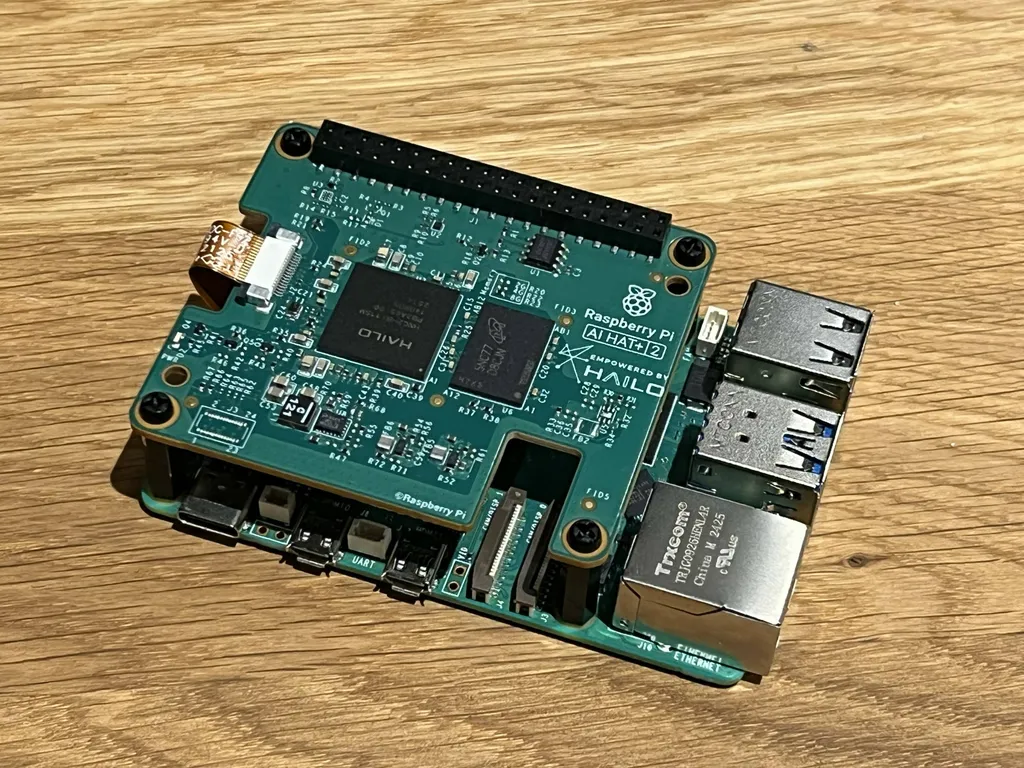

Raspberry Pi 5 gets LLM smarts with AI HAT+ 2

Raspberry Pi has released the AI HAT+ 2, an add-on board for the Raspberry Pi 5 that offers 40 TOPS (INT4) of inference performance and 8 GB of onboard RAM for running AI models locally. It uses the Hailo-10H accelerator to support large language models, vision language models, and other generative AI tasks. Although the board offloads AI processing from the Pi's main system, the article questions who actually needs it, given that higher-memory Pi models and cheaper alternatives are available.

- The AI HAT+ 2 delivers 40 TOPS (INT4) of inference performance using the Hailo-10H neural network accelerator.

- It includes 8 GB of onboard RAM and connects via the Raspberry Pi's GPIO and PCIe interface.

- The board supports models such as Qwen2, Llama3.2, and DeepSeek-R1-Distill at launch, with native integration into Raspberry Pi OS.

- Computer vision performance is roughly on a par with the 26 TOPS (INT4) of the previous AI HAT+ model.

- Priced at $130, it faces competition from the older AI HAT+ and the $70 AI camera for vision tasks.
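To put the 8 GB and INT4 figures in context, here is a rough back-of-the-envelope sketch of how much memory a model's weights would take at 4 bits per parameter. The parameter counts are hypothetical examples, not the sizes of the launch models. The estimate covers weights only and ignores the KV cache, activations, and runtime overhead, so real headroom will be smaller.

```python
def weight_footprint_gib(params_billions: float, bits_per_weight: int = 4) -> float:
    """Approximate weight storage in GiB at the given quantization width."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


# From the article: 8 GB of onboard RAM (treated as 8 GiB here for simplicity).
ONBOARD_RAM_GIB = 8.0

# Hypothetical model sizes in billions of parameters, for illustration only.
for size in (1.0, 1.5, 3.0, 8.0):
    need = weight_footprint_gib(size)
    fits = need < ONBOARD_RAM_GIB
    print(f"{size:>4.1f}B params: ~{need:.2f} GiB of INT4 weights, fits in 8 GiB: {fits}")
```

By this estimate, each billion parameters costs a little under half a gibibyte at INT4, so the weights of small models fit comfortably in the onboard memory.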

Opening excerpt (first ~120 words)

40 TOPS of inference grunt, 8 GB onboard memory, and the nagging question: who exactly needs this?

Richard Speed, Thu 15 Jan 2026 // 11:49 UTC

Raspberry Pi has launched the AI HAT+ 2 with 8 GB of onboard RAM and the Hailo-10H neural network accelerator aimed at local AI computing. On paper, the specifications look great. The AI HAT+ 2 delivers 40 TOPS (INT4) of inference performance. The Hailo-10H silicon is designed to accelerate large language models (LLMs), vision language models (VLMs), and "other generative AI applications." Computer vision performance is roughly on a par with the 26 TOPS (INT4) of the previous AI HAT+ model.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at The Register.