NVIDIA Launches Nemotron 3 Nano Omni Model, Unifying Vision, Audio and Language for up to 9x More Efficient AI Agents

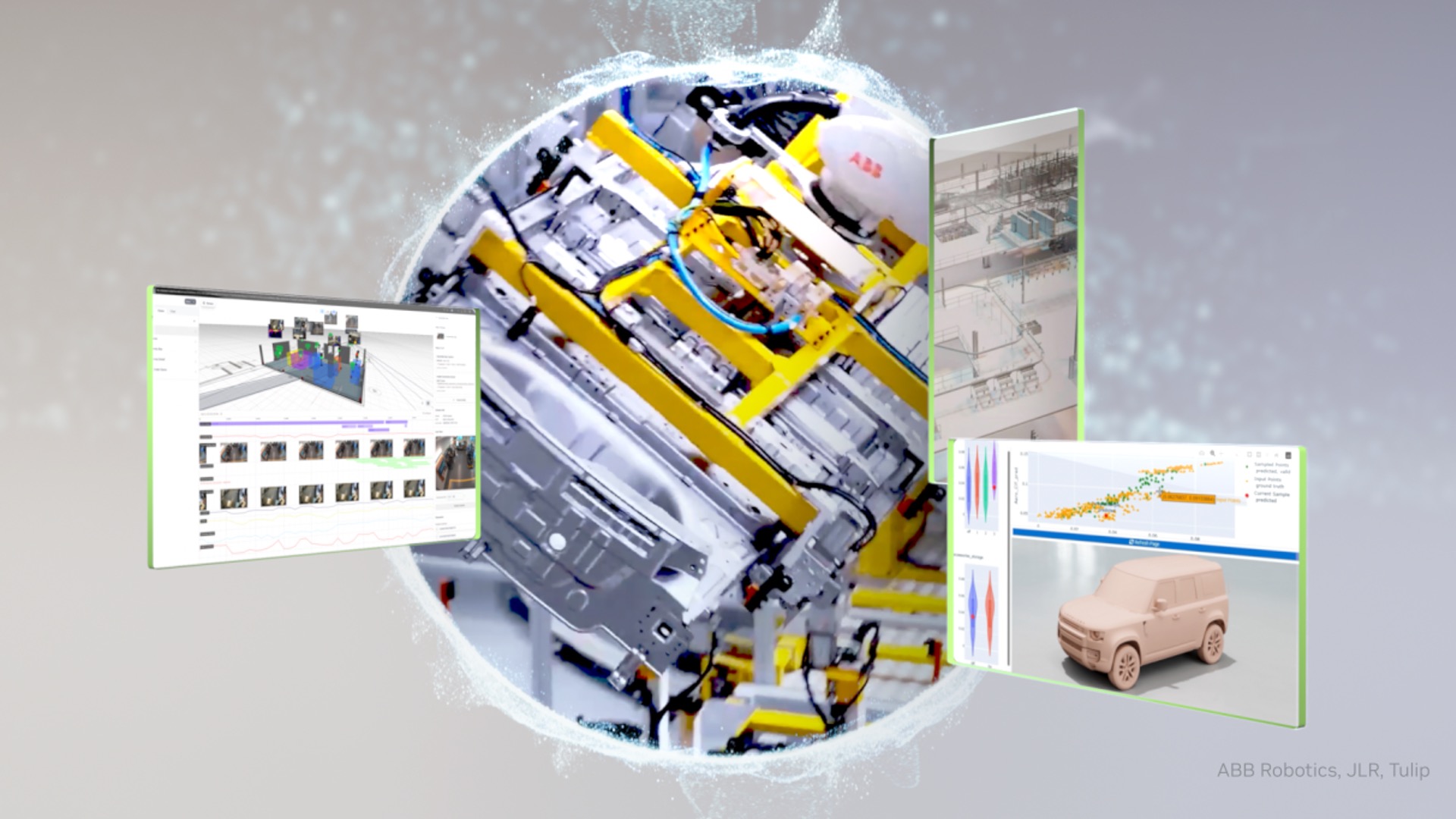

NVIDIA has launched Nemotron 3 Nano Omni, an open multimodal AI model that integrates vision, audio, and language processing into a single efficient system, enabling up to 9x higher throughput for AI agents. The model supports high-resolution inputs and is designed for enterprise applications like document intelligence, computer use agents, and audio-video reasoning. It operates as a perception sub-agent within larger agentic workflows and is deployable across edge, data center, and cloud environments. Released with open weights and tools for customization, it offers full control for regulated or domain-specific use cases.

Opening excerpt (first ~120 words)

Best-in-class open omni-modal reasoning model delivers the highest efficiency and accuracy to power agentic workflows such as computer use, document intelligence and audio-video reasoning.

April 28, 2026 by Kari Briski

AI agent systems today juggle separate models for vision, speech and language, losing time and context as they pass data from one model to the other.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at NVIDIA Blog.