LLM 0.32a0 is a major backwards-compatible refactor

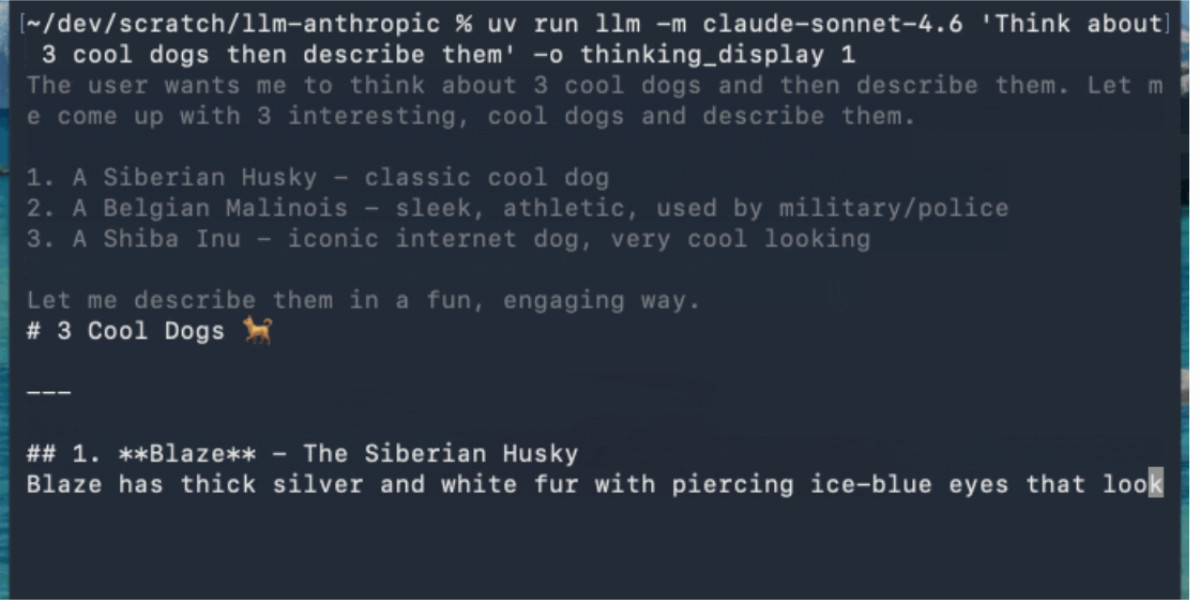

LLM 0.32a0 is an alpha release of the LLM Python library and CLI tool that introduces a major backwards-compatible refactor to better support diverse input and output types in modern language models. The update shifts from a simple prompt-response model to one that supports sequences of messages and streaming responses with multiple content types. These changes aim to align the library with current LLM capabilities such as multi-turn conversations, structured outputs, and multimodal processing.
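The shift described above, from one prompt and one text response to sequences of messages and streams of mixed content, can be illustrated with a minimal sketch. Everything in it is hypothetical and invented for illustration: the classes `TextPart`, `ImagePart` and `Message` and the functions `fake_stream` and `collect_text` are not LLM 0.32a0's actual API, and `fake_stream` stands in for a real model. The sketch models a conversation as a list of role-tagged messages whose content is a list of typed parts, and a response as a stream of typed parts rather than a single string.

```python
from dataclasses import dataclass, field
from typing import Iterator, Literal, Union

# Hypothetical types for illustration only; NOT the actual LLM 0.32a0 classes.


@dataclass
class TextPart:
    text: str


@dataclass
class ImagePart:
    url: str


# A piece of content is one of several types, not always a plain string.
Part = Union[TextPart, ImagePart]


@dataclass
class Message:
    role: Literal["system", "user", "assistant"]
    parts: list[Part] = field(default_factory=list)


def fake_stream(messages: list[Message]) -> Iterator[Part]:
    """Hypothetical stand-in for a model: yields typed parts one at a time."""
    last = messages[-1]
    n_text = sum(isinstance(p, TextPart) for p in last.parts)
    n_images = sum(isinstance(p, ImagePart) for p in last.parts)
    yield TextPart("Received ")
    yield TextPart(f"{n_text} text part(s) and ")
    yield TextPart(f"{n_images} image part(s).")
    # Echo any images back, so the stream mixes content types.
    for p in last.parts:
        if isinstance(p, ImagePart):
            yield p


def collect_text(stream: Iterator[Part]) -> str:
    """Join only the text parts, skipping other content types."""
    return "".join(p.text for p in stream if isinstance(p, TextPart))


# A multi-turn conversation; the final user message mixes text and an image.
conversation = [
    Message("system", [TextPart("Answer briefly.")]),
    Message("user", [TextPart("Capital of France?")]),
    Message("assistant", [TextPart("Paris.")]),
    Message("user", [TextPart("What is in this picture?"),
                     ImagePart("https://example.com/cat.png")]),
]

print(collect_text(fake_stream(conversation)))
# → Received 1 text part(s) and 1 image part(s).
```

Keeping content as typed parts means a caller that only wants text can filter the stream, while other callers can still handle images or other content types as they arrive.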

Opening excerpt (first ~120 words):

29th April 2026

I just released LLM 0.32a0, an alpha release of my LLM Python library and CLI tool for accessing LLMs, with some consequential changes that I’ve been working towards for quite a while.

Previous versions of LLM modeled the world in terms of prompts and responses. Send the model a text prompt, get back a text response.

```python
import llm

model = llm.get_model("gpt-5.5")
response = model.prompt("Capital of France?")
print(response.text())
```

This made sense when I started working on the library back in April 2023. A lot has changed since then!

LLM provides an abstraction over thousands of different models via its plugin system.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at Simon Willison’s Weblog.