AI in an Age of Humanity

The article explores the evolving relationship between artificial intelligence and military applications, highlighting OpenAI's shift from banning military use of its technology to signing a contract with the US government. It draws parallels between fictional narratives like Ender’s Game and real-world developments, emphasizing the ethical challenges and unintended consequences of AI deployment. The piece questions whether AI creators can control how their technologies are used, especially as governments integrate AI into defense systems despite stated restrictions.

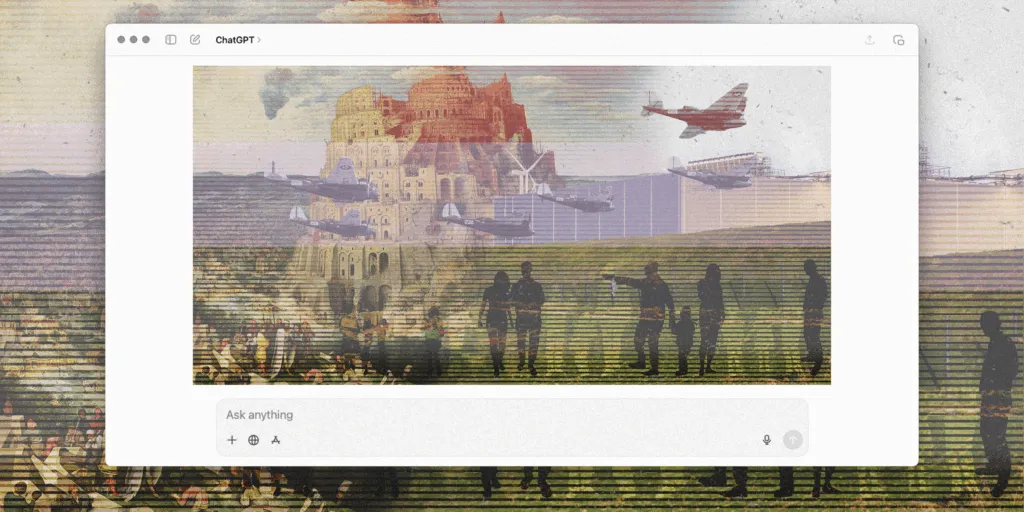

Art by Beck & Stone.

Forum, May 1, 2026

Spencer A. Klavan

The good and the bad news is, we're in charge.

The beloved 1985 young-adult novel Ender’s Game tells the story of a boy who thinks he’s practicing to kill aliens using a computer simulation, until he discovers he was remotely piloting real ships and hitting real targets all along. Now, in 2026, there is a program at the US Department of Defense called Ender’s Foundry. It exists to simulate battle scenarios and “ensure we stay ahead of AI-enabled adversaries.” The irony of this is probably not lost on Sam Altman, CEO of OpenAI (the company behind ChatGPT). Until very recently, OpenAI’s official corporate policy banned the use of its programs for military purposes.

…

Excerpt limited to ~120 words for fair-use compliance. The full article is at Law & Liberty.
