A new Moore's Law for AI agents

The length of tasks that agents can do is growing exponentially

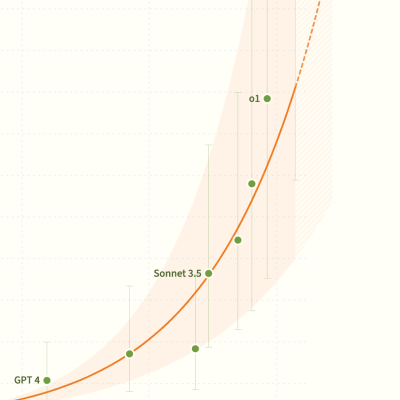

Last updated March 2026

When ChatGPT came out in 2022, it could do 30-second coding tasks. Today, AI agents can autonomously do coding tasks that take humans over fourteen hours.

The length of coding tasks frontier systems can complete is growing exponentially – doubling every 7 months.

This trend was discovered by researchers at METR. They took the most capable agents from 2019 to 2026, and tested them on about 230 tasks: mostly coding tasks, with some on general reasoning. Then, they compared each agent's success rate to the length of each task – how long it takes human professionals to complete, ranging from under 30 seconds to over 8 hours.

Across all models tested, two clear patterns emerged:

- Task length is highly correlated with agent success rate (R² = 0.83)
- The length of tasks that agents succeed at 50% of the time – the time horizon – is growing exponentially

What comes next?

This exponential trend seems robust, and there's no evidence of plateauing. Exponentials grow fast. Extrapolating out, this trend predicts:

- 2027: 1 work day (8 hours)
- 2028: 1 work week (40 hours)
- 2029: 1 work month (167 hours)

Recently, the trend has accelerated. In 2024-2025, time horizons doubled every 4 months, down from every 7 months over 2019-2025.

If the faster trend continues, agents might reach month-long tasks in 2027. However, looking at just one year's data gives a less robust estimate. The rate of progress might slow down.

It might also speed up. Given that the trend has already sped up, it could be on a growth trajectory that's faster than exponential. This fits intuitively: there might be a bigger gap in required skills between 1- and 2-week tasks than between 1- and 2-year tasks. Additionally, as AIs improve they'll be increasingly useful for developing yet more capable AIs.
This could also lead to superexponential growth in AIs' time horizons.

Increasingly capable AI systems could trigger a flywheel of acceleration – agents speeding up the creation of more capable agents, which speed up the creation of more capable agents. From here, agent capabilities might skyrocket beyond any human's abilities in AI research – and across many or all other domains. The effects would be transformative. If automating AI research leads to progress this fast, the rapidly increasing time horizon of AI systems might end up being one of the most important trends in human history.
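
For readers who want to check the doubling arithmetic behind the extrapolations, here is a minimal Python sketch. It is not METR's method: it simply assumes a steady doubling time and a single starting value. The function names `horizon_after` and `months_to_reach` are hypothetical, and the 14-hour starting point is an assumption taken from the "over fourteen hours" figure rather than from a fitted trend line, so the output will not necessarily match the year-by-year list in the article.

```python
import math


def horizon_after(start_hours: float, months_elapsed: float, doubling_months: float) -> float:
    """Time horizon (in hours) after `months_elapsed`, assuming steady doubling."""
    return start_hours * 2 ** (months_elapsed / doubling_months)


def months_to_reach(start_hours: float, target_hours: float, doubling_months: float) -> float:
    """Months needed to grow from `start_hours` to `target_hours` under steady doubling."""
    return doubling_months * math.log2(target_hours / start_hours)


if __name__ == "__main__":
    # Assumption: use the article's "over fourteen hours" as a rough starting point.
    start = 14.0
    # Doubling times quoted in the article: 7 months (2019-2025) and 4 months (2024-2025).
    for doubling in (7.0, 4.0):
        for label, target in (("work week", 40.0), ("work month", 167.0)):
            months = months_to_reach(start, target, doubling)
            print(f"doubling every {doubling:g} months: {label} ({target:g} h) "
                  f"in about {months:.0f} months")
```

Because the starting value is one rough anchor rather than a fitted trend, the resulting dates are illustrative only. The point of the sketch is how strongly the answer depends on the doubling time.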

This excerpt is published under fair use for community discussion. Read the full article at AI Digest.