A maintenance agent: 412 fixed, 14 refused. The 14 are the point

A maintenance agent ran 10 days: 559 bugs filed, 412 fixed, 14 refused. The useful number is what it wouldn't touch — and why that makes it trustworthy.


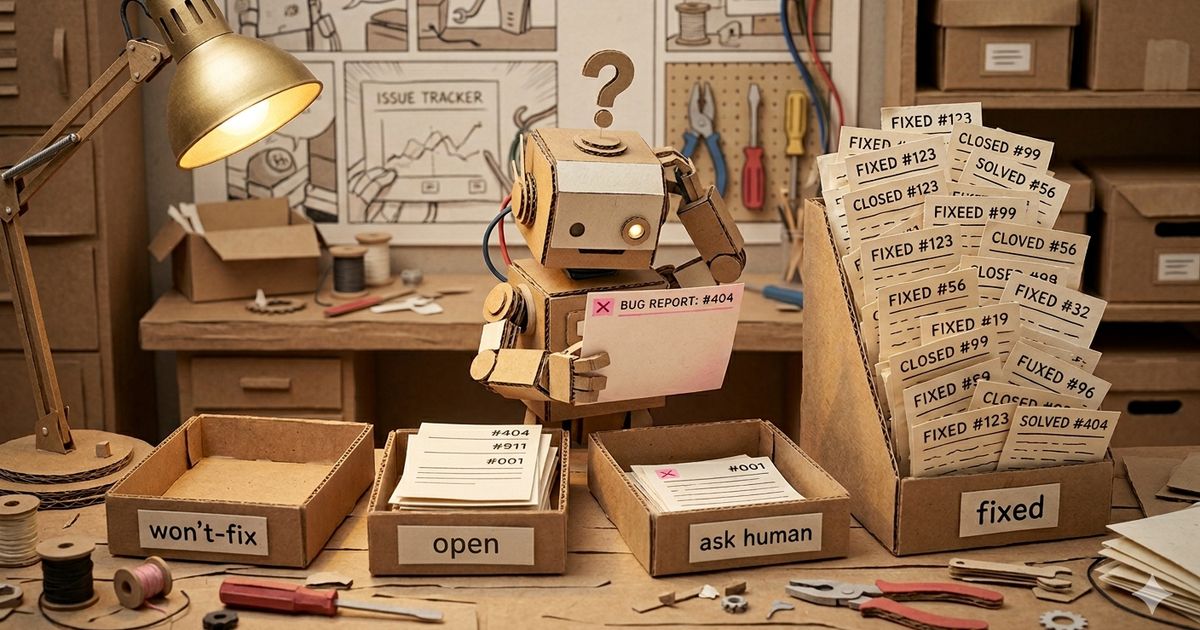

I ran a maintenance agent for 10 days. The number that mattered was 14

April 26, 2026

I ran a maintenance agent loop against a real codebase for 10 days. It filed 559 bugs, fixed 412 on its own, asked me for help on 14, and marked 31 as won’t-fix. The remaining 100 are in the open queue.

The interesting number isn’t the 412. It’s the 14.

The setup

The codebase is a working SaaS: a Go backend and a TypeScript/Next.js frontend, roughly 200k lines between them, plus a non-trivial migration history. Not a toy project.

The loop is boring. Each bug gets a fresh `claude -p` invocation, with no conversation chains and no long-lived agent memory.

Seven specialist agents (security, performance, tests, database, code-quality, config, api-design) run in parallel, each scanning the codebase independently and writing its findings as markdown files. A consolidator pass deduplicates the findings. Then a fix loop processes each bug in its own isolated subprocess: read the bug, read the code, write a regression test that reproduces the issue, apply a minimal fix, run the full test suite, and commit only if everything passes. If the tests still fail after two retry cycles, the fix is reverted and the bug flips to needs-human. A fix that breaks the build doesn’t ship.

One rule is strictly enforced in the prompt: audit-only, never add. The agent isn’t allowed to introduce new infrastructure, new services, new dependencies, or new tests beyond regression coverage. If a fix requires adding something, the agent doesn’t fix it. It escalates.

That constraint is the whole bet.
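The per-bug fix loop described above boils down to a commit-or-escalate decision. Here is a minimal Python sketch of that decision, not the author’s code: `attempt_fix`, `run_tests`, `commit`, and `revert` are hypothetical callables standing in for the fresh `claude -p` run, the full test suite, and version control, and “two retry cycles” is read (as an assumption) as one initial attempt plus two retries.

```python
from enum import Enum
from typing import Callable


class Outcome(Enum):
    AUTO_RESOLVED = "auto-resolved"
    NEEDS_HUMAN = "needs-human"


# Assumption: "two retry cycles" = one initial attempt plus two retries.
MAX_RETRIES = 2


def process_bug(
    bug_id: str,
    attempt_fix: Callable[[str, int], bool],
    run_tests: Callable[[], bool],
    commit: Callable[[str], None],
    revert: Callable[[str], None],
    max_retries: int = MAX_RETRIES,
) -> Outcome:
    """Run the fix loop for one bug; commit only when the full suite passes.

    attempt_fix (hypothetical) stands in for one fresh agent run: write a
    regression test, apply a minimal fix, and return False if the fix would
    require adding anything beyond regression coverage.
    """
    for attempt in range(1 + max_retries):
        if not attempt_fix(bug_id, attempt):
            # Audit-only rule: a fix that needs something new escalates.
            revert(bug_id)
            return Outcome.NEEDS_HUMAN
        if run_tests():
            # Green suite: the fix and its regression test ship together.
            commit(bug_id)
            return Outcome.AUTO_RESOLVED
    # Still red after every retry: undo the change and ask a human.
    revert(bug_id)
    return Outcome.NEEDS_HUMAN


if __name__ == "__main__":
    log: list[str] = []
    suite_results = iter([False, True])  # fails once, passes on the first retry
    outcome = process_bug(
        "BUG-1",
        attempt_fix=lambda bug, attempt: True,
        run_tests=lambda: next(suite_results),
        commit=lambda bug: log.append(f"commit {bug}"),
        revert=lambda bug: log.append(f"revert {bug}"),
    )
    print(outcome.value, log)  # auto-resolved ['commit BUG-1']
```

The sketch leaves out the specialist scan, the consolidator, and the won’t-fix path; it only models the gate. Keeping that gate binary (commit on green, revert and escalate otherwise) is what makes “a fix that breaks the build doesn’t ship” hold no matter what the agent proposes.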
What happened

Over 10 days and 35 pipeline runs, the distribution landed here:

Figure: Distribution of 559 bugs by outcome, with the 14 refused highlighted.

- 412 auto-resolved (73.7%)
- 100 open (17.9%): the working queue
- 31 won’t-fix (5.5%): bugs the agent judged not worth fixing, each with its reasoning
- 14 needs-human (2.5%): escalations
- 2 regressions across 754 ever-created bug IDs: bugs that got deduplicated and re-filed by a later run

“Bug” here is loose. These are what the seven specialists flag: security gaps, performance concerns, missing tests, config drift, code-quality nits, API contract questions. They are audit findings, not user-reported production failures; I’m using the agent’s own word.

The retry rate is low. Of the 412 auto-resolved bugs, only 28 needed more than one commit, and the maximum was 5. The loop isn’t thrashing on any single bug.

But the headline number, 73.7% auto-resolved, isn’t the story. Any agent loop can post a high auto-resolution rate if you let it touch anything. The question is whether that number reflects honest work, or whether the agent papered over things it shouldn’t have touched.

The specialist variance

One chart carries most of the argument:

Figure: Auto-resolved rate by specialist, ranked descending, against the 73.7% average.

| Specialist | Auto-resolved |
|---|---|
| security | 90% |
| config | 81% |
| code-audit | 79% |
| database | 74% |
| code-quality | 69% |
| performance | 68% |
| tests | 66% |
| api-design | 51% |

Security bugs auto-fix. API design bugs don’t.

Security sits at 90% because most real-world security fixes are small and self-contained: one missing authorization check, one query that should be parameterized, one validator that accepts the empty string. You can see the fix in three lines and verify it with a regression test. There’s nothing downstream to break.

API design sits at 51% because the fix usually means changing a public contract: renaming a field, changing a response shape, splitting an…
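The outcome shares and the specialist chart can be cross-checked with a few lines of arithmetic. This sketch uses only the counts and rates quoted in this excerpt; the constant and function names are illustrative, not the author’s.

```python
"""Cross-check the outcome shares and specialist chart quoted in the article."""

# Outcome counts reported for the 10-day run.
OUTCOMES = {
    "auto-resolved": 412,
    "open": 100,
    "won't-fix": 31,
    "needs-human": 14,
}
TOTAL_BUGS = 559
MULTI_COMMIT = 28  # auto-resolved bugs that needed more than one commit

# Auto-resolved rate per specialist as read off the chart, in percent.
SPECIALIST_RATES = {
    "security": 90,
    "config": 81,
    "code-audit": 79,
    "database": 74,
    "code-quality": 69,
    "performance": 68,
    "tests": 66,
    "api-design": 51,
}
OVERALL_RATE = 73.7  # the chart's "avg" line


def shares(counts: dict[str, int], total: int) -> dict[str, float]:
    """Percentage of the total for each outcome, rounded to one decimal."""
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


def split_by_average(
    rates: dict[str, int], average: float
) -> tuple[list[str], list[str]]:
    """Specialists at or above the overall rate, and those below it."""
    above = [name for name, rate in rates.items() if rate >= average]
    below = [name for name, rate in rates.items() if rate < average]
    return above, below


if __name__ == "__main__":
    print(shares(OUTCOMES, TOTAL_BUGS))
    # The four named buckets sum to 557, leaving 2 of the 559 unaccounted for.
    print(TOTAL_BUGS - sum(OUTCOMES.values()))  # 2
    # 412 / 559 reproduces the chart's average line.
    print(round(100 * OUTCOMES["auto-resolved"] / TOTAL_BUGS, 1))  # 73.7
    # The unweighted mean of the eight bars is lower than 73.7.
    print(sum(SPECIALIST_RATES.values()) / len(SPECIALIST_RATES))  # 72.25
    # Share of auto-resolved bugs that needed more than one commit.
    print(round(100 * MULTI_COMMIT / OUTCOMES["auto-resolved"], 1))  # 6.8
    print(split_by_average(SPECIALIST_RATES, OVERALL_RATE))
```

Two things fall out. The four named buckets add up to 557, two short of 559; those two plausibly correspond to the 2 regressions, though the excerpt doesn’t say so. And 412/559 matches the chart’s 73.7% average exactly, while the plain mean of the eight bars is 72.25%, which is consistent with the average line being the pooled rate, where specialists with more findings carry more weight.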

This excerpt is published under fair use for community discussion. Read the full article at Adriacidre.